Editor’s Note: Please welcome Ardon, who is bringing some much-needed hard data to answer some of the more difficult questions we face in the game. I think you’ll like this piece, so enjoy! – Corbin

One of the great appeals of Magic is that it tests our skills. But powerful cards cost more money, which leads to some awkward tension: did we win because we outmaneuvered our opponents, or did we simply outspend them? Are we becoming better players, or just more invested? The idea of “pay-to-win” is Magic’s biggest elephant creature token in the room. I’m a graduate student, so I thought, why not collect data? I found evidence that money influences results, but not in the way I expected. As a result, I think we should pay less attention to win percentage, and focus instead on consistency.

For context, some amount of pay-to-win is not unique to Magic. Many sports and hobbies use equipment, and that allows for some financial advantage. I would guess that games exist on a spectrum: at one end, chess is incredibly pure. The best chess players in the world use the same pieces, and there’s no advantage to artisanally carved golden queens. At the other end of the spectrum, car racing probably depends heavily on having the best equipment. Golf clubs can cost $600 to $1,200, and the best Modern decks easily cost more. Is this a bad thing for an optional hobby? How much influence is too much? These are ethical and philosophical questions that we should debate as a community. But the extent to which money influences success in Magic is a scientific question that we can start to answer here.

Methods

I found top-8 decklists for 16 of the biggest paper Modern tournaments in 2015. For each deck I recorded the deck name, its final result, and its total paper price as of November 24, 2015, right after GP Pittsburgh; note that prices have changed since then. This yielded 125 top-8 decks. It’s a small data set, but it’s enough to start making some graphs.
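A minimal Python sketch of the bookkeeping, assuming each top-8 deck is stored as one record. It rolls the records up into the per-archetype columns used later (average cost, average place, and share of top 8s). The `Top8Entry` and `summarize` names and the sample entries are hypothetical, and reading “% top 8” as the share of events with at least one copy of the archetype in the top 8 is an assumption.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Top8Entry:
    event: str      # tournament name
    archetype: str  # deck name as recorded
    place: int      # final position within the top 8 (1 = winner)
    price: float    # total paper price in USD at a single snapshot date


def summarize(entries):
    """Roll per-deck top-8 records up into per-archetype statistics."""
    all_events = {e.event for e in entries}
    by_archetype = defaultdict(list)
    for e in entries:
        by_archetype[e.archetype].append(e)

    summary = {}
    for name, rows in by_archetype.items():
        events_reached = {r.event for r in rows}
        summary[name] = {
            "avg_cost": sum(r.price for r in rows) / len(rows),
            "avg_place": sum(r.place for r in rows) / len(rows),
            # Assumption: share of events with at least one copy in the top 8.
            "pct_top8": 100 * len(events_reached) / len(all_events),
        }
    return summary


# Hypothetical, truncated top 8s (a real top 8 has eight entries).
entries = [
    Top8Entry("Event A", "Jund", 1, 1900.0),
    Top8Entry("Event A", "Affinity", 2, 760.0),
    Top8Entry("Event B", "Jund", 5, 1880.0),
    Top8Entry("Event B", "Merfolk", 3, 600.0),
]

for name, stats in summarize(entries).items():
    print(name, stats)
```

Counting events rather than copies keeps an archetype that puts two decks into one top 8 from scoring above 100%.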

Insight #1: Position within the Top 8 has nothing to do with deck cost

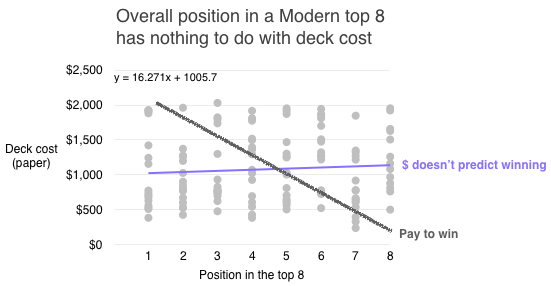

The first question I asked was can we predict how well a deck does in the top 8 based on its price? If Magic were perfectly pay-to-win, the dots would line up along the grey diagonal line: higher cost equals better results:

Instead, we see a totally flat trend line, meaning deck cost does not predict winning. You can win with a $550 Merfolk deck (you’re welcome, Corbin) just as easily as you can take 8th place with a $2,000 Jund deck.

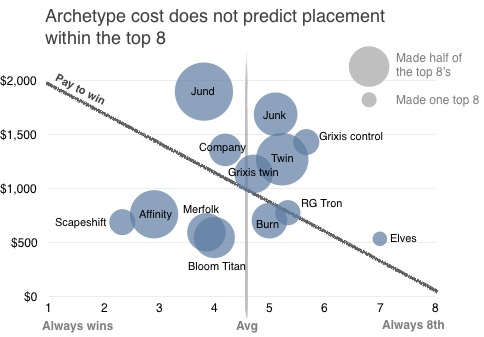

We get a more interesting picture if we cluster by deck archetype:

What this means: here are some reasons why deck price doesn’t predict winning:

- Affinity and Scapeshift are the most likely to win once they make the top 8, and they’re among the cheapest decks. Scapeshift’s small bubble means it doesn’t make the top 8 very often, but when it does, it does very well.

- Jund sits at the top, meaning it’s the most expensive. Jund’s enormous bubble means it’s very likely to reach the top 8, but once it gets there, it performs only slightly above average.

- Twin does not seem to be as dominant as some have claimed. Comparing the two Twin variants, Grixis Twin costs less than UR Twin and places better on average, but reaches the top 8 less consistently.

- Elves’ adorable little bubble means they rarely reach the top 8, and their position to the right means that once they do, they don’t do very well. I love Elves in Legacy, but when it comes to Modern: go home, Elves.

The fact that Jund appeared in every top 8 inspired me to replot by consistency, and the results stunned me:

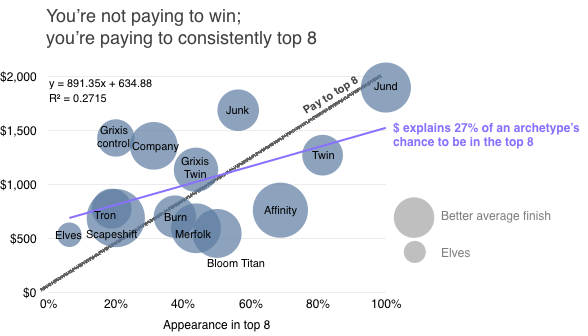

Insight #2: Magic isn’t pay to win; it’s pay to consistently top 8

Here the purple trend line isn’t flat; it’s much closer to the “pay to top 8” line. This means that an archetype’s cost explains about 27% of the variation in how often that archetype reaches the top 8. That’s not a perfect correlation, but it’s substantial. For comparison, your genes explain 20-40% of childhood IQ. On average, each additional 10 percentage points of top-8 appearances costs about $89.
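As a rough check on these two numbers, the sketch below runs an ordinary least-squares fit on the 13 archetype rows from the ROI table later in this article (average cost and % of top 8s). Treating the $89 figure as the slope of cost regressed on top-8 rate is an assumption about how it was derived; with these rows the sketch gives an R² of about 0.27 and about $89 per 10 points. The helper name is illustrative.

```python
# (archetype, average cost in USD, % of the 16 top 8s it reached), from the ROI table
ROWS = [
    ("Affinity", 763, 69), ("Bloom Titan", 547, 50), ("Merfolk", 595, 44),
    ("Jund", 1900, 100), ("UR Twin", 1273, 81), ("Scapeshift", 690, 20),
    ("Naya Burn", 703, 38), ("Grixis Twin", 1137, 44), ("Company", 1359, 31),
    ("Junk", 1690, 56), ("RG Tron", 777, 19), ("Grixis control", 1432, 20),
    ("Elves", 535, 6),
]


def sums_of_squares(xs, ys):
    """Return the centered sums Sxx, Syy and Sxy used by least squares."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    return sxx, syy, sxy


costs = [cost for _, cost, _ in ROWS]
top8 = [pct for _, _, pct in ROWS]
sxx, syy, sxy = sums_of_squares(costs, top8)

# R^2 is the same whichever variable is put on the x-axis.
r_squared = sxy ** 2 / (sxx * syy)
# Assumption: "$89 per 10%" = slope of cost regressed on top-8 rate, times 10.
dollars_per_10_points = 10 * sxy / syy

print(f"R^2 = {r_squared:.2f}")
print(f"about ${dollars_per_10_points:.0f} per 10 points of top-8 rate")
```

Note that regressing top-8 rate on cost instead gives a different slope, because with R² this low the two regression lines are far apart.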

A few points here:

- Jund is the most expensive and the most consistent; it made the top 8 of every tournament I looked at.

- Twin is the second most consistent, at 87%.

- Affinity punches above its weight class, making 73% of top 8s and doing very well once it gets there.

- Grixis control is the most overcosted; it sits way above the diagonal line.

- Go home, Elves.

Insight #3: Archetype ROI is complicated

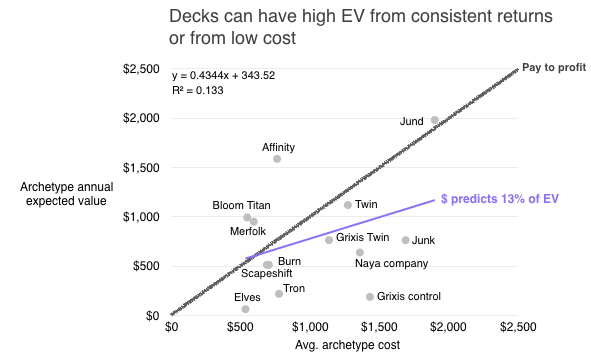

So if deck price predicts consistency in top 8 but not performance, what deck offers the best return on investment (ROI)? I took the GP prize structure and calculated each deck’s expected value as the average amount of money that archetype won over these 16 top 8’s. Then I calculated a predicted return on investment based on that archetype’s cost:

The grey diagonal line represents what pure pay-to-profit would look like. Purple is the actual trend of the data, showing that archetype cost explains only about 13% of the variation in expected value (EV).
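A quick check of the 13% figure, assuming it is the R² of a straight-line fit of EV against average cost over the archetypes in the ROI table below. With those 13 rows the sketch gives an R² of about 0.13. The `r_squared` helper is illustrative.

```python
# (archetype, average cost in USD, EV in USD), from the ROI table
ROWS = [
    ("Affinity", 763, 1588), ("Bloom Titan", 547, 993), ("Merfolk", 595, 950),
    ("Jund", 1900, 1981), ("UR Twin", 1273, 1119), ("Scapeshift", 690, 513),
    ("Naya Burn", 703, 513), ("Grixis Twin", 1137, 763), ("Company", 1359, 638),
    ("Junk", 1690, 763), ("RG Tron", 777, 219), ("Grixis control", 1432, 188),
    ("Elves", 535, 63),
]


def r_squared(xs, ys):
    """Coefficient of determination for a least-squares line through (xs, ys)."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    return sxy ** 2 / (sxx * syy)


costs = [cost for _, cost, _ in ROWS]
evs = [ev for _, _, ev in ROWS]
r2_ev = r_squared(costs, evs)
print(f"R^2 of EV against cost = {r2_ev:.2f}")
```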

Some points here:

- Overall, there’s only a weak relationship between deck price and EV

- Jund and Affinity have high EV, but for different reasons: Jund is the peak of expensive and consistent, while Affinity overperforms as a cheap deck that can spike a tournament

- Jund has the highest EV, but also the highest cost, which brings its ROI down to 1.0 (see table)

- Affinity has the highest ROI, followed by Bloom Titan and Merfolk (you’re welcome, Corbin)

- Grixis control is the most overcosted, and Junk lives up to its name
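The EV definition used here (total prize money an archetype won in these top 8s, divided by the number of events) can be sketched as follows. The payout numbers are placeholders, not the real 2015 GP prize structure, and `expected_value` is a hypothetical helper.

```python
# PLACEHOLDER payouts by top-8 position; these are NOT the actual 2015 GP prize amounts.
PLACEHOLDER_PRIZES = {1: 100, 2: 70, 3: 50, 4: 50, 5: 30, 6: 30, 7: 30, 8: 30}


def expected_value(placements, prizes, n_events):
    """Average prize money per event for one archetype.

    placements: every top-8 finish the archetype recorded across all events.
    Events where it missed the top 8 add no money but still count in n_events.
    """
    return sum(prizes[p] for p in placements) / n_events


# A hypothetical archetype that won one event and took 5th twice across 16 events.
ev = expected_value([1, 5, 5], PLACEHOLDER_PRIZES, n_events=16)
print(ev)
```

Dividing by all 16 events, rather than only the events an archetype reached, is what lets consistency raise EV: Jund collects something every time, while Scapeshift collects only when it shows up.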

There’s an interesting tension here: expensive decks are more consistent, so they earn more prizes. But cheaper decks don’t need as many prizes to return your investment, at least financially speaking. Decks like Affinity, Bloom Titan, and Merfolk cost about as much as a GP 12th-place prize, and unlike Elves, they are fully capable of reaching the top 8 at these events. Keep in mind that this is an archetype’s success over time, not necessarily yours; your ability to take a tier 1 deck to the top 8 is a separate issue.

| Archetype | Avg. cost ($) | Avg. place | % of top 8s | EV ($) | ROI |
|---|---|---|---|---|---|
| Affinity | 763 | 2.9 | 69% | 1,588 | 2.1 |
| Bloom Titan | 547 | 4.0 | 50% | 993 | 1.8 |
| Merfolk | 595 | 3.9 | 44% | 950 | 1.6 |
| Jund | 1,900 | 3.8 | 100% | 1,981 | 1.0 |
| Twin – UR | 1,273 | 5.2 | 81% | 1,119 | 0.9 |
| Scapeshift | 690 | 2.3 | 20% | 513 | 0.7 |
| Burn – Naya | 703 | 5.0 | 38% | 513 | 0.7 |
| Twin – Grixis | 1,137 | 4.7 | 44% | 763 | 0.7 |
| Company | 1,359 | 4.2 | 31% | 638 | 0.5 |
| Junk | 1,690 | 5.1 | 56% | 763 | 0.5 |
| Tron – RG | 777 | 5.3 | 19% | 219 | 0.3 |
| Grixis control | 1,432 | 5.7 | 20% | 188 | 0.1 |
| Elves | 535 | 7.0 | 6% | 63 | 0.1 |
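The ROI column is EV divided by average cost. The sketch below recomputes it from the other two columns and prints it next to the published value; every row rounds to the table’s ROI.

```python
# (archetype, average cost in USD, EV in USD, ROI as published in the table above)
TABLE = [
    ("Affinity", 763, 1588, 2.1),
    ("Bloom Titan", 547, 993, 1.8),
    ("Merfolk", 595, 950, 1.6),
    ("Jund", 1900, 1981, 1.0),
    ("Twin - UR", 1273, 1119, 0.9),
    ("Scapeshift", 690, 513, 0.7),
    ("Burn - Naya", 703, 513, 0.7),
    ("Twin - Grixis", 1137, 763, 0.7),
    ("Company", 1359, 638, 0.5),
    ("Junk", 1690, 763, 0.5),
    ("Tron - RG", 777, 219, 0.3),
    ("Grixis control", 1432, 188, 0.1),
    ("Elves", 535, 63, 0.1),
]


def roi(ev, cost):
    """Ratio of an archetype's EV to its average deck cost."""
    return ev / cost


for name, cost, ev, published in TABLE:
    print(f"{name:<15} computed {roi(ev, cost):.2f}  table {published}")
```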

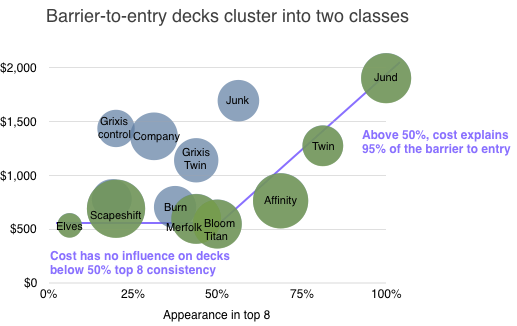

Insight #4: There are two distinct classes of Modern archetypes

This is a surprising finding. It started when I was looking at another way to approach the problem of pay-to-win by looking for the barrier to entry. What’s the minimum cost of each level of consistency?

- $380 is the absolute floor in 2015 – a mono-blue timewarp spiked Moscow RPTQ exactly once.

- $500 is the barrier to 50% consistency in the top 8, with Bloom Titan. This is a new archetype, and 2015 was particularly good for Amulet Bloom.

- $750 is the barrier to 70% consistency with Affinity

- $1,000 is the barrier to 80% consistency with UR Twin

- $1,900 is the barrier to the most consistency which in 2015 was Jund.

This trend strongly reinforces the pay-to-consistency hypothesis. You can do well with a cheap deck, just not consistently. Now check out the most cost-effective decks at each level of consistency:

This graph blew my mind right out of my head and into the chair behind me. Efficient archetypes (by which I mean the barriers to entry: the cheapest archetypes to reach each level of consistency) clearly fall into two distinct classes. Class 1 decks reach the top 8 less than 50% of the time. These decks show absolutely no correlation between cost and performance; their line is horizontal. Class 2 decks are the cheapest archetypes that reach the top 8 at least half the time, and here cost explains nearly 95% of the variation in consistency. So a $500 archetype can reach the top 8 anywhere from rarely to about half the time. Above 50%, it’s almost entirely pay-to-consistency: every additional 10 percentage points of consistency costs about $280.
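The barrier-to-entry idea amounts to a cost/consistency frontier: an archetype is a barrier deck if no other archetype is both cheaper and at least as consistent. The sketch below applies that rule to the 13 rows of the ROI table. The frontier rule and the 50% split (with Bloom Titan’s exactly-50% counted in Class 2) are assumptions about the procedure, and the $380 mono-blue deck is left out because it is not in the table. With these rows it keeps Elves in Class 1 and Bloom Titan, Affinity, UR Twin and Jund in Class 2.

```python
# (archetype, average cost in USD, % of the 16 top 8s it reached), from the ROI table.
# The $380 mono-blue deck is not in the table, so it is left out here.
ROWS = [
    ("Affinity", 763, 69), ("Bloom Titan", 547, 50), ("Merfolk", 595, 44),
    ("Jund", 1900, 100), ("UR Twin", 1273, 81), ("Scapeshift", 690, 20),
    ("Naya Burn", 703, 38), ("Grixis Twin", 1137, 44), ("Company", 1359, 31),
    ("Junk", 1690, 56), ("RG Tron", 777, 19), ("Grixis control", 1432, 20),
    ("Elves", 535, 6),
]


def barrier_decks(rows):
    """Keep archetypes that no other archetype undercuts at equal or higher consistency.

    An archetype is dropped if some other deck is strictly cheaper and reaches
    the top 8 at least as often. The survivors are sorted by consistency.
    """
    frontier = [
        (name, cost, pct)
        for name, cost, pct in rows
        if not any(c < cost and p >= pct for n, c, p in rows if n != name)
    ]
    return sorted(frontier, key=lambda row: row[2])


frontier = barrier_decks(ROWS)
# Assumption: the class boundary is 50%, with exactly 50% going to Class 2.
class_1 = [row for row in frontier if row[2] < 50]
class_2 = [row for row in frontier if row[2] >= 50]

print("Class 1:", [name for name, _, _ in class_1])
print("Class 2:", [name for name, _, _ in class_2])
```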

There are several caveats here, and the most important is reverse causality: decks that do well become expensive. This makes the influence of money on performance hard to untangle, but in a future article I’ll look at how archetypes stabilize over time to get some clues.

If this is right, the financial implication is that we can predict the market value of barrier-to-entry archetypes. If a new deck starts reaching top 8s with 80% consistency, it will probably settle onto this line (and cost over $1,000). Certain cards in that deck will be due for a spike to soak up the missing value as more people compete to own the cheapest deck in that category. For example, Affinity is a little undercosted given how well it performs.
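The Class 2 line and the price prediction can be sketched as a least-squares fit of average cost on top-8 rate over the four Class 2 barrier decks in the ROI table. Assuming that is how the line was drawn, it gives about $279 per 10 points (R² of roughly 0.95) and a predicted price of roughly $1,260 for a barrier deck at 80% consistency, consistent with the “over $1,000” estimate. The `fit_line` helper is illustrative.

```python
# Class 2 barrier decks: (archetype, % of top 8s reached, average cost in USD),
# taken from the ROI table.
CLASS_2 = [
    ("Bloom Titan", 50, 547),
    ("Affinity", 69, 763),
    ("UR Twin", 81, 1273),
    ("Jund", 100, 1900),
]


def fit_line(xs, ys):
    """Ordinary least squares y = intercept + slope * x; also returns R^2."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, my - slope * mx, sxy ** 2 / (sxx * syy)


pct = [p for _, p, _ in CLASS_2]
cost = [c for _, _, c in CLASS_2]
# Assumption: the Class 2 line regresses cost (y) on top-8 rate (x).
slope, intercept, r_squared = fit_line(pct, cost)

print(f"about ${10 * slope:.0f} per 10 points of top-8 rate (R^2 = {r_squared:.2f})")
print(f"predicted price of an 80% barrier deck: ${intercept + slope * 80:.0f}")
```

With only four points, one new archetype could move this line noticeably, so the prediction is best read as a rough anchor rather than a price target.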

Some other caveats: we’re limited to looking only at the top 8; a better data set would cover the top 100, but it’s hard to find enough data in one place. If you know of better sources, or want to collect the data yourself, please get in touch. The small sample size hurts the most in figure 2: some decks appear in the top 8 only once, so it’s hard to draw conclusions about their performance.

What does this mean for the game?

Does Magic create a meritocratic world that rewards luck and skill? Or does it simply reflect privilege? We’ve seen that expensive decks are no guarantee of victory, and that a new archetype can spike a tournament. Budget wins are a long shot, but they’re legitimately there. That’s not a trivial accomplishment for a game that’s decades old, and a feature that’s probably maintained by Modern bannings. At the same time, it’s true that well-established archetypes in a trading card game are subject to economic forces; more consistent decks cost more. Magic is not a game where both players start equally. Yet this is one of Magic’s core innovations: every game of chess and Monopoly and Scrabble starts the same, but in Magic, you bring half of the board, and you have no idea what your opponent will bring. That innovation is part of what brings us variety, complexity, self-expression, and entire columns dedicated to the Science of Magic.

Fantastic article, thank you.

Great article, I love this sort of analysis. Modern is definitely more about playing what people consider to be the best deck at any given time; there are fewer players who stick to one archetype than in Legacy, where you have the Joe Lossetts and Caleb Scherers of the world. That being said, players in Modern who stick to their decks, like Tom Ross or Gerard Fabiano, have had a lot of success. I would advocate playing what you enjoy unless the metagame is really at odds with it.

Just remember, the decks that made top 8 were most likely not piloted by the same person and were not exactly the same (just reminding the readers). Maybe the next step would be to correlate it with the number of copies of each deck that made day 2, to see if the proportions of decks in day 2 mean anything for top 8.

Interesting hypotheses. I wish there were more data to beef up the conclusions. I’m not surprised to see Jund at the top of the major paper tournaments; it’s a lot more difficult for “meta calls” to pay off when there are 500-2000 people in the room. Jund and Twin are/were all-around solid choices for 15 rounds. As you said, it’s only natural for consistent decks to end up costing more money, though a single top-eight performance of a rogue deck is enough to spark buying frenzies these days.

That said, there were several cause-and-effect relationships with the metagame:

(A) Printing of Tasigur and Gurmag Angler enables decks to play a big threat while leaving up countermagic. Rise of Grixis Control (Winter/Spring 2015), Grixis Twin (Spring 2015), and Blue Jund (Summer).

(B) Printing of Kolaghan’s Command further pushed Grixis and also led to a Jund resurgence over Abzan (Spring).

(C) Tron is good again! Community did not initially accept Ugin, but results from Super Standard League and SCG Invitational changed that perception (early Summer).

(D) Pro Tour FRF = Pro Tour Amulet Bloom. The number of proficient pilots increased until the ban, which warped the format to include lots of Blood Moons and MD Spell Snare. Twin benefited from both, and the BGx decks that survived the gauntlet benefited from the Twin numbers.

(E) Affinity is well-positioned mid-summer, leading to a resurgence. Numbers probably dropped from fear of Kolaghan’s Command, but it turns out not to matter so much.

(F) BFZ: printing of Bring to Light and Cinder Glade led to a resurgence of Scapeshift. Printing of Ulamog fundamentally shifted Tron’s end-game. Printing of Wasteland Strangler, Blight Herder, and Oblivion Sower put Eldrazi on the map.

Very well thought out and a welcome addition to the price team! Looks like I might try to go Affinity!

Affinity confirmed best bang-for-buck in modern. Just be prepared to eat a lot of hate.

Concerning your 3rd “insight”:

You can’t just say that there is no correlation and subsequently make correlation claims.

That being said, what would be more interesting is breaking down these results into categories such as Aggro, Control and Combo, and, if you have more time, into deeper or shared categories like control-combo, aggro-tempo, etc.

This will essentially make things more interesting in the sense that:

– Is aggro the cheapest and wins most?

– Is combo that oppressive?

– Is control that bad?

Those are good things to know. So far we have both extremes in terms of all those archetypes:

– Grixis control isn’t doing well but costs a lot while Jund does very well and also costs a lot.

– Elves isn’t doing well either, but Affinity is.

And so, you can delve deeper into what is the proper approach to modern and what are the correct archetypes for what colors.

Good article.

Correlation coefficient on the graph plotting “the more people that read this article, the more expensive Affinity will get” = +1.

HAhahAH

Great Article. Welcome, Ardon.

Another caveat not mentioned in the article is that top 8’ing a tournament is heavily affected by how many people are playing the deck, which is in turn heavily affected by two factors: price (a high price DECREASES the amount of people that will play that deck) and perceived strength (a high perceived strength INCREASES the amount of people playing that deck).

Good article.

Thanks for including your regression results in the graphs – I welcome this sort of complicated analysis. I’m a little disappointed the R-squared was so low on your initial graph, but it definitely helps to illustrate that although you can explain cost with consistency about 27% of the time, the other 73 percent is skill, luck, and other external factors.

Fantastic analysis and article, this one should be one for the books!

A great read! I love the graphs, and the insight and explanations of them.

I do have one big concern though – deck popularity wasn’t factored in. I realize this information might not be available, but I can’t help but think it has significance.

While Jund might have always put at least 1 copy in the top 8, that should be weighed against total population of Jund decks registered before round 1 begins. Always putting 1 copy in the top 8 is respectable no matter how we look at it, but the EV changes if Jund is 40% of the round 1 field vs 10% of the round 1 field.

Excellent article! Seems like the community semi-discovered the value of Affinity and pushed its price to the EV of the deck.

Fantastic article. This makes me wish there were a central database of decks from a large variety of tournaments, with cards, place, and winnings.

I know SCG has a decklist database that is fairly large. It might be worth talking to them about access.

Well done.

You could still say it’s pay for the best deck in the meta to win.

If you compare two jund players to each other, the one with enough funds to buy into an additional Zoo or Tron deck has much higher win chances!

Interesting, however without the numbers of decks actually played at the tournaments, I think your methodology is flawed. While it might be true that simple top 8 numbers correlate with price, irrespective of actual tournament performance, I do not believe that is the case.

For an extreme example, say 90% of the field played Jund, and the rest of the top 8 decks each made up an even proportion of the remaining 10%. People playing Jund would have by far the most inconsistent deck at the tournament, with people playing other decks having a much greater likelihood to top 8 or win.

If you want to measure “consistency”, i.e. the EV of playing a deck at a tournament, the measurement you need is the number of players on the archetype compared to the number who top 8.

Also, the correlation is flawed because Modern card prices are not solely dictated by demand for Modern decks. In particular, Jund’s price is inflated by Legacy demand for Goyfs and the general underprinting of that card.

That said, the simple number of times a deck shows up in standings may be an indicator of card prices since many people net deck and shops, players etc., tend to seek cards from decks that did well.